Hi~ I’m reading the blog about columnar storage engine in TiFlash (https://pingcap.com/zh/blog/how-tidb-implements-columnar-storage-engine), the author says they tried to implement columnar storage using LSM-Tree but its read performance is not as good as Delta-Tree, I wonder what engineers working on TiFlash have done with LSM-Tree (optimization, reorganization or other design) to implement columnar storage. Is there any documentation I can achieve?

TiFlash Blog about Column Storage Implementation Using LSM-Tree

Great question.

As you may know that the code base of TiFlash is based on ClickHouse. So the first version of the columnar storage engine in TiFlash is MergeTree, the storage engine of ClickHouse.

MergeTree has a LSM-Tree like structure, and uses Size Tired Compaction strategy.

Here are some efforts we tried on MergeTree to make it more suitable for TiDB’s HTAP workload.

- Since we need to guarantee strong consistency based on MVCC model, we added 3 columns: PK, Version(the commit_ts in TiDB), IsDeleted (upsert or delete). And for each read, we need to merge all related files together.

- We found that the more files, i.e. more levels in LSM-Tree, the slower the read speed. Even though many clever tricks are used. So we have to do full compactions on the whole table data after some kind of threshold. It solved some problems with huge drawbacks: the performance is unstable and the write amplification is large.

- Then we realize that the better way to solve the “too many levels” problem is splitting the LSM-Tree into smaller ones. So that each small LSM-Tree has limited data and limited files. The read speed is better and more stable.

- Many small LSM-Tree means many individual flush operations. Note that for write throughput, we don’t have a WAL file to provide durability. Because the raft log in the raftstore already provide durability before the data is actually written into TiFlash’s columnar storage engine. So the flush operations become more frequent. And the result is many small files. Small files are bad for read performance.

- To solve the “many small files” problem, we made two optimizations: 1. use an object storage to store those small files; 2. do small compaction constantly on them.

- We found that merge is still slowing down the read performance, even though the related files are less. It is really a pain on the ass. To resolve it once and for all, we created a structure called DeltaIndex to eliminate the expensive key comparing processing, and to speed up data copy(by batch copy).

- And now we have a DeltaTree!

And sadly we don’t have further blogs about DeltaTree. And there is a plan to make more after we OPEN SOURCE TiFlash.

Thanks for your detailed reply and now I have a clear idea about the development of TiFlash.

The mechanisms LSM-Tree designed for write optimization actually slow down its read performance and make it not very suitable for columnar storage.

It seems like a general (and popular) storage architecture for HTAP is a Key-Value based storage engine for OLTP and a file-based storage engine for OLAP workloads just like TiKV and TiFlash in TiDB. I really wonder what makes it not a good choice to implement column storage based on Key-Value storage, is the poor read performance of LSM-Tree?

In fact, I’m very open to implementing a columnar storage engine based on K-V storage. And Rockset seems made a very good example. The outcome of Rockset looks promising, and I really like the flexibility of its data model. The main concern is that the read performance is slowed down by the K-V API, and the extra steps of converting K-V data into column format data.

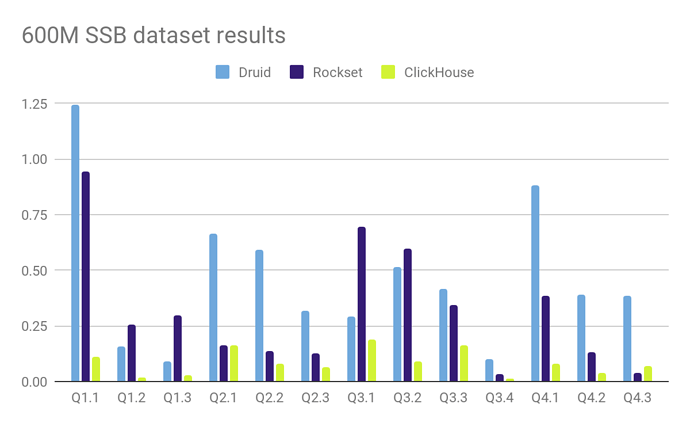

Here is an example. The performance between ClickHouse (column native) and Rockset:

And note that the Altinity guy used a flattened mode(because ClickHouse runs join poorly). We only consider the result of Q1, as both ClickHouse and Rockset don’t need to do joins, which is fair enough.